By Rachel Tompa, Ph.D. / Allen Institute

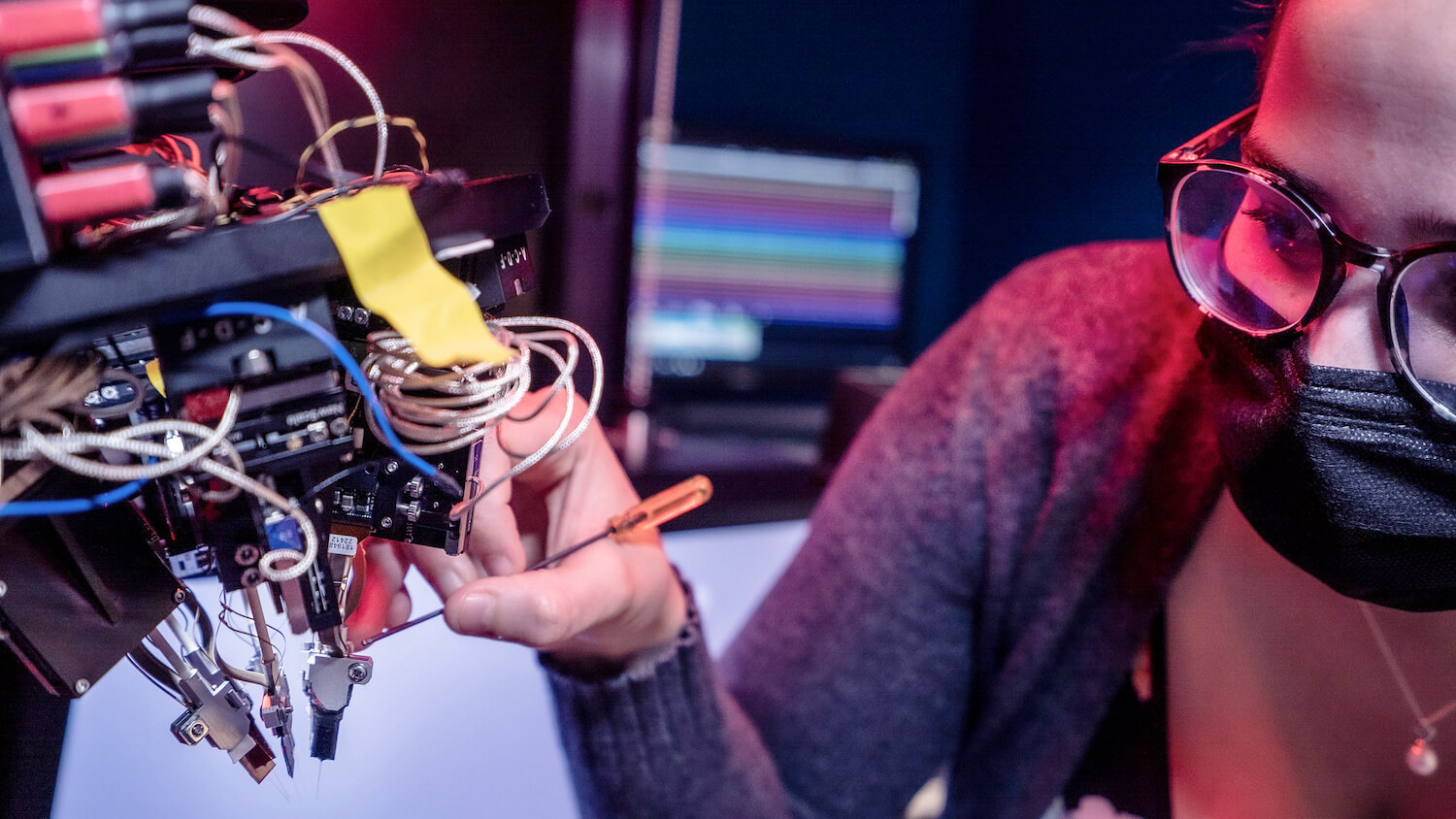

The mouse waits for the experiment to start. Its legs jitter as it runs on a specialized treadmill. Its tail is straight and high in the air.

Finally, the scientists are ready. They gently swing a monitor in front of his face and lower a small blind, shielding his peripheral vision from distractions. The mouse is now alone inside what is effectively a black box, focusing on the changing images on the large computer monitor in front of him and on a tiny waterspout near his mouth.

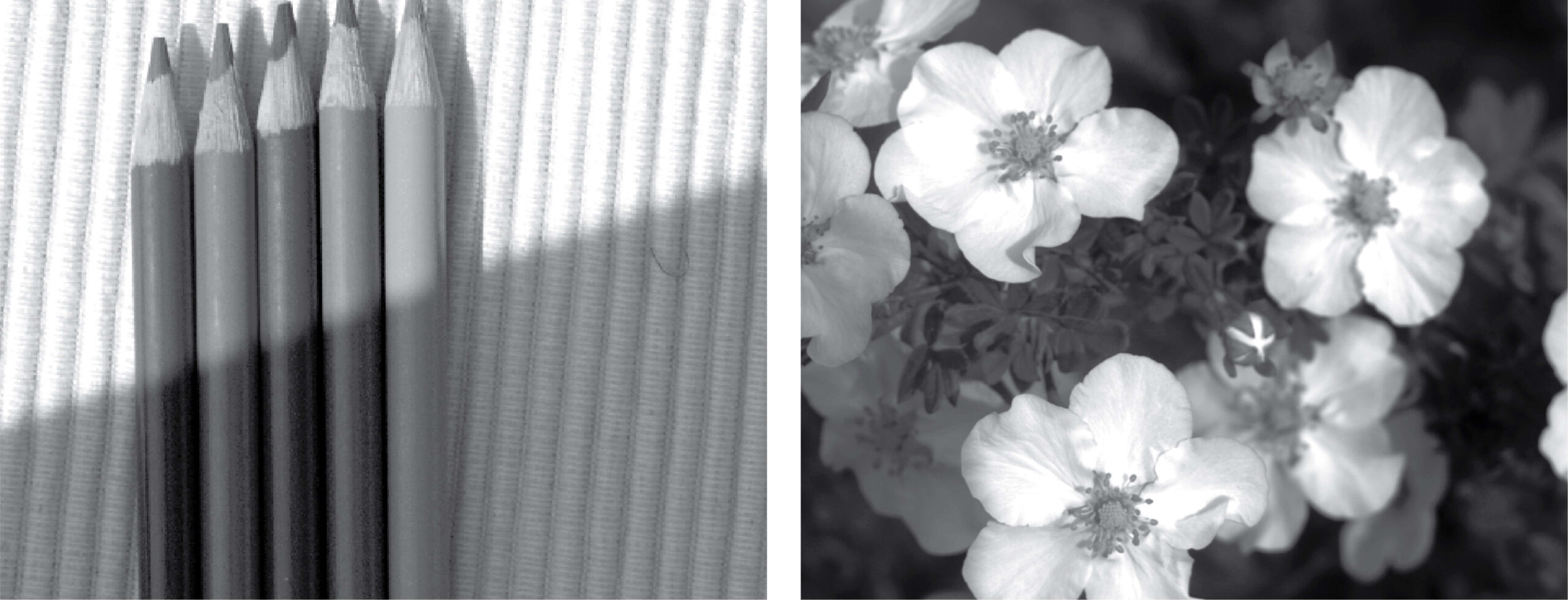

He could use a drink, but he’s been trained to lick the spout only when the image in front of his face changes, so he waits a little longer. An image of pencils flashes in front of his eyes: pencils, pencils, pencils, then suddenly a flower appears — he immediately licks and gets some water.

“What’s amazing is people don’t really think of mice as very smart, but they can do really complex tasks,” said Séverine Durand, Ph.D., a neuroscientist in the Allen Institute’s MindScope Program who’s overseeing the experiments.

As the animal performs its task, six super-thin silicon probes known as Neuropixels record electrical chatter from more than a thousand neurons in action across several areas of the mouse’s brain. The scientists sit just outside the black box, watching on their own sets of monitors as colorful waveforms appear from the animal’s brain activity.

The Allen Institute team just released a dataset full of electrical information like this, the largest dataset of Neuropixels recordings ever collected. The publicly available dataset contains detailed information from more than 300,000 mouse neurons in action collected as the animals performed their trained task — licking a waterspout when they see a changed image, also known as an oddball task.

These data represent billions of split-second electrical pulses that comprise the brain’s language of information. From this massive collection of cellular activity, the scientists hope to decode the neural computations that underlie behavior.

The 300,000 brain cells are just a drop in the bucket of the mouse brain’s 75 million total neurons, but it’s a much larger drop than anyone has ever collected in a single set of standardized experiments. Before the advent of technologies like Neuropixels, scientists could only eavesdrop on handfuls of single neurons at a time. It’s like trying to deduce the rules of an unknown sports game by just watching one player, said Allen Institute neuroscientist Corbett Bennett, Ph.D., who was part of the team that led the creation of the dataset.

“People have discovered really valuable, interesting things with that method. But now that we can record from 1,000 neurons in every experiment, we’re seeing so much more of the field, and we can determine a few more pieces of the rules of the game,” Bennett said. “Of course, in this case there are millions of players, so it’s complicated.”

‘The footprint of perception’

While a mouse’s ability to tell pencils from flowers might seem esoteric, the scientists hope to extrapolate broader information from the dataset. The suite of electrical activity represents what they call a “perception-action cycle,” the process by which what is perceived leads to an action. The data capture how the brain processes visual information that comes from the eyes, how the animal makes sense of what it sees, how it decides to take an action (to lick or not to lick) and how it translates that decision into movement.

“We’re hoping to capture the footprint of perception in this neural activity,” said Shawn Olsen, Ph.D., an investigator in the Allen Institute’s MindScope Program who helped lead the data collection and analysis.

The dataset includes activity gathered from a few dozen different regions of the brain, most of which are involved in processing visual information. In the next phase of data collection, the team plans to expand to other areas of the brain, such as those that coordinate movement. The dataset also includes neural activity from mice that were viewing images but weren’t asked to perform any task, as well as from mice exposed to completely new images. How the brain distinguishes novel images from familiar ones is a fascinating but still poorly understood process, Olsen said.

“We’ve tried to incorporate multiple interesting features, so that other scientists can analyze different aspects of the dataset,” Olsen said. “It’s not designed for just one question.”

About the Allen Institute

Allen Institute is a 501(c)(3) nonprofit medical research organization dedicated to accelerating science for a healthier world. Through large-scale, multidisciplinary research initiatives, the Institute generates foundational knowledge, data, tools, and models that are shared openly with the world to advance our understanding of life and health. Founded by Jody Allen and the late Paul G. Allen, Allen Institute is supported primarily by the Fund for Science and Technology.