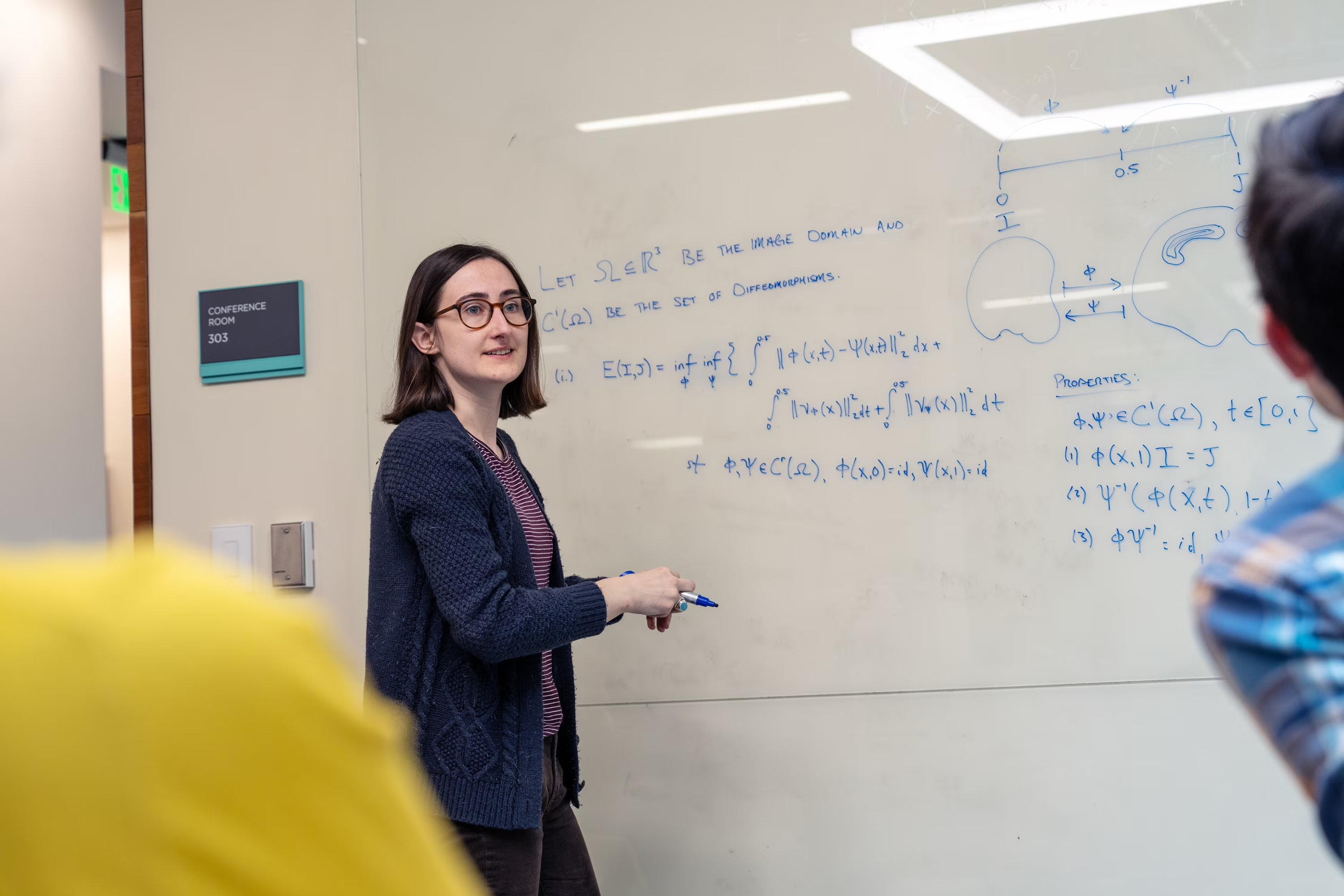

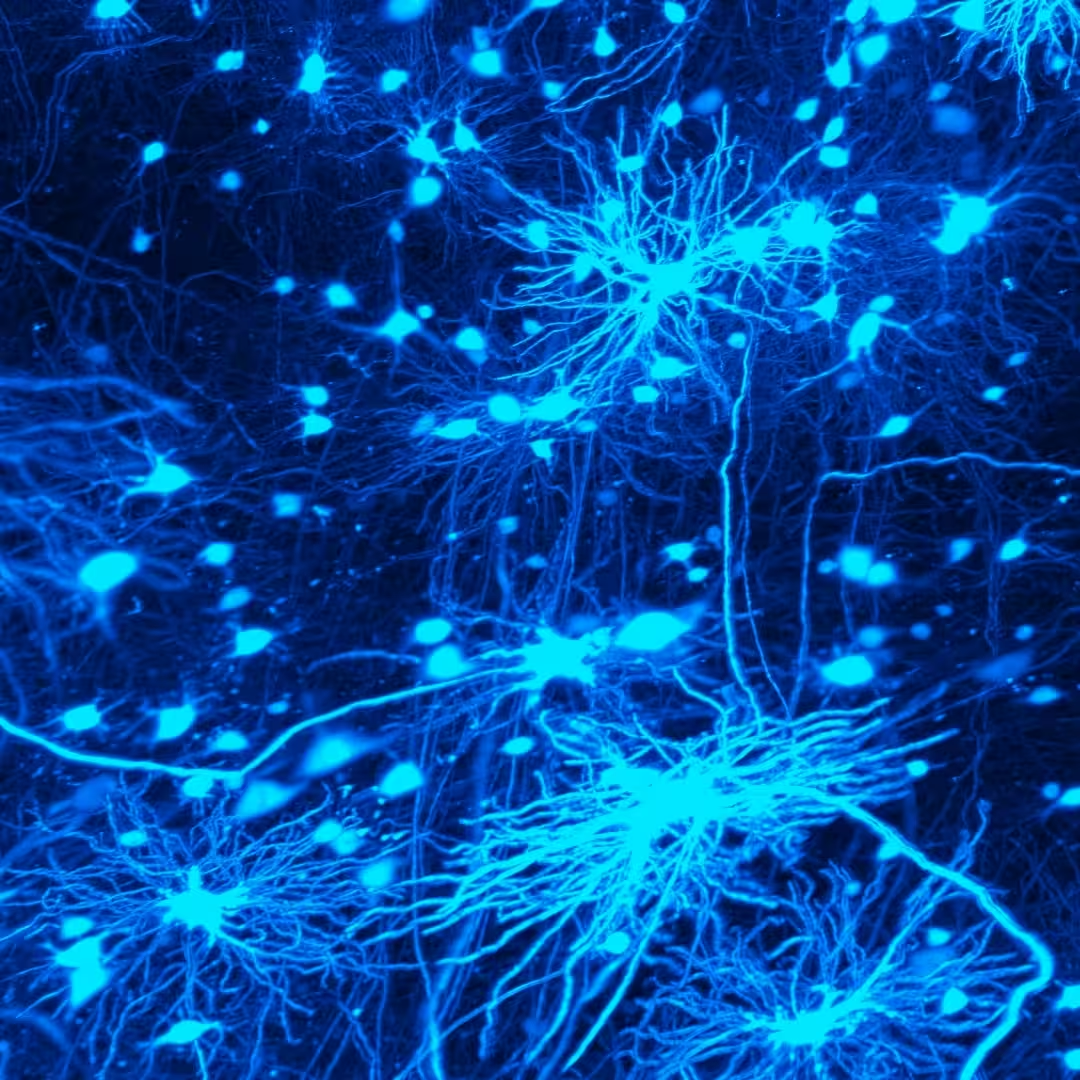

neural dynamics

Every decision, every habit, every learned skill starts with patterns of activity in your brain.

We can observe behavior: what a person or animal does in any given moment. What we can't easily see is the cascading neural activity in the brain that produced it. Bridging that gap is the defining challenge of neuroscience, and it requires studying all the pieces of the brain at once.

We study how animals make flexible, real-world decisions like foraging for resources while minimizing effort and avoiding danger. These kinds of behaviors recruit almost every major brain system. By recording neural activity across the whole brain and building mathematical models that tie it to behavior, we're discovering the algorithms that let the brain learn, generalize, and adapt. With this powerful new perspective, our open datasets and tools will seed an entirely new generation of neuroscience.

research projects

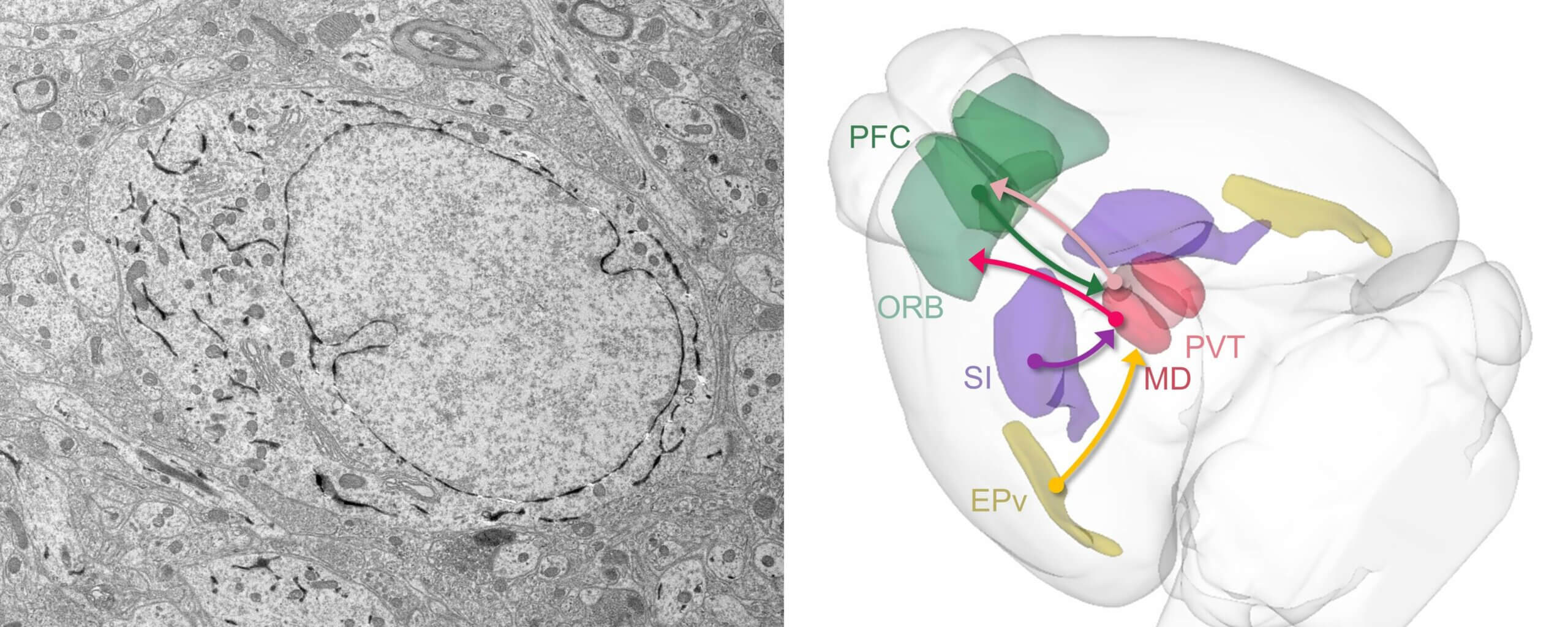

Cell Types & Learning

Investigating how specific brain cell types change their activity during novelty and learning using calcium imaging and spatial transcriptomics.

Dynamic Routing

Studying how the brain flexibly reroutes neural activity during task-switching in mice.

OpenScope

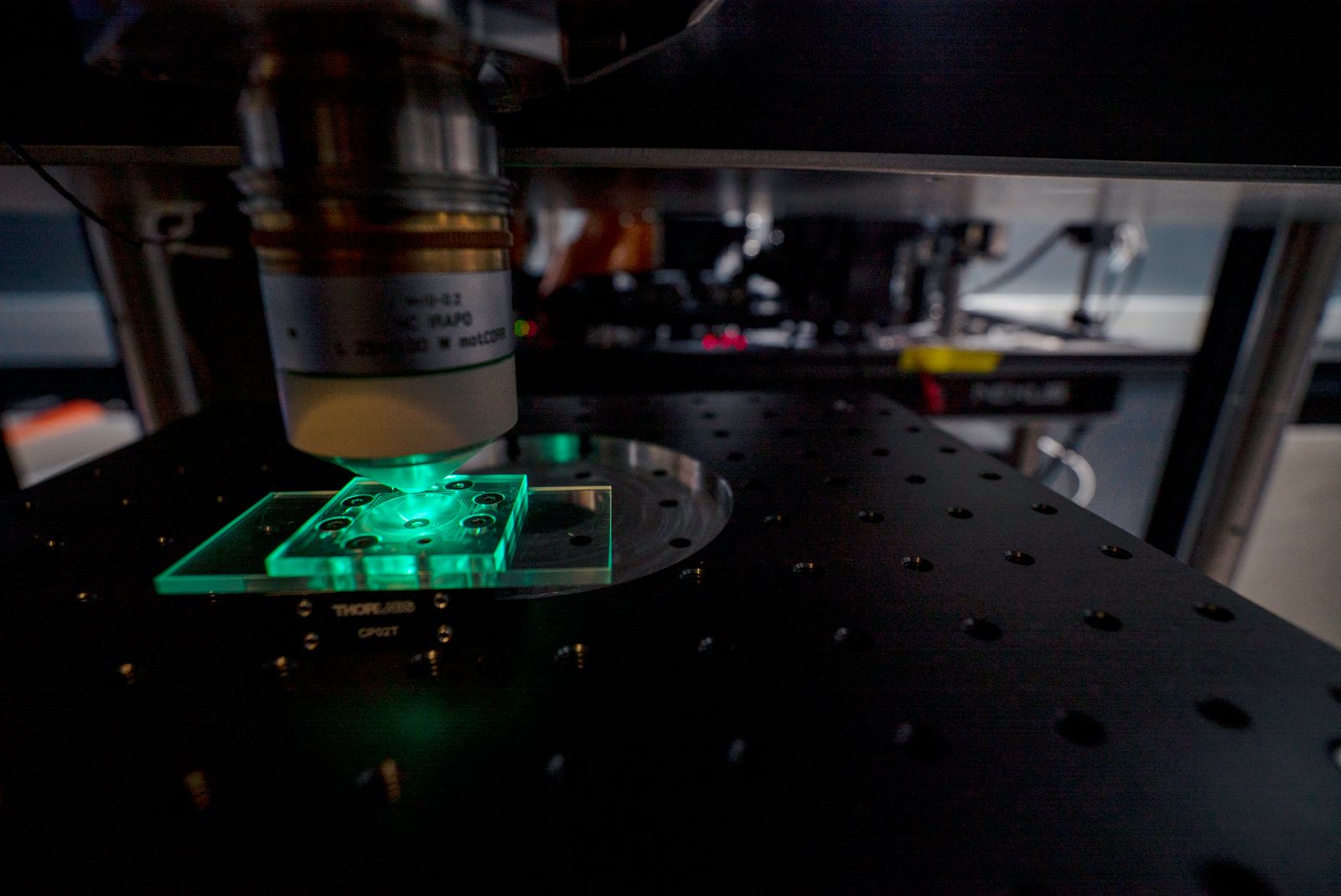

A shared neuroscience platform where global researchers propose experiments that are executed using advanced tools at the Allen Institute.

Single-Cell Computation

Exploring how individual neurons compute by tracking synaptic inputs and outputs at single-synapse resolution in living brains.

Brain-Wide Neuromodulation

Examining how different neuromodulator neuron types influence learning and decision-making across brain networks.

Credit Assignment During Learning

Using brain-computer interfaces and optical mapping to understand how the brain updates synapses during learning without disrupting existing knowledge.

neural dynamics news

Sculpted by evolution, the brain’s amazing, complex neural circuits produce our behavior within the world. We aim to discover how information processed by networks of neurons generates our thoughts, emotions and actions.

Block Quote

neural dynamics publications

access open tools, brain atlases, neuroscience databases, and more

neural dynamics events

neural dynamics scientific advisory council