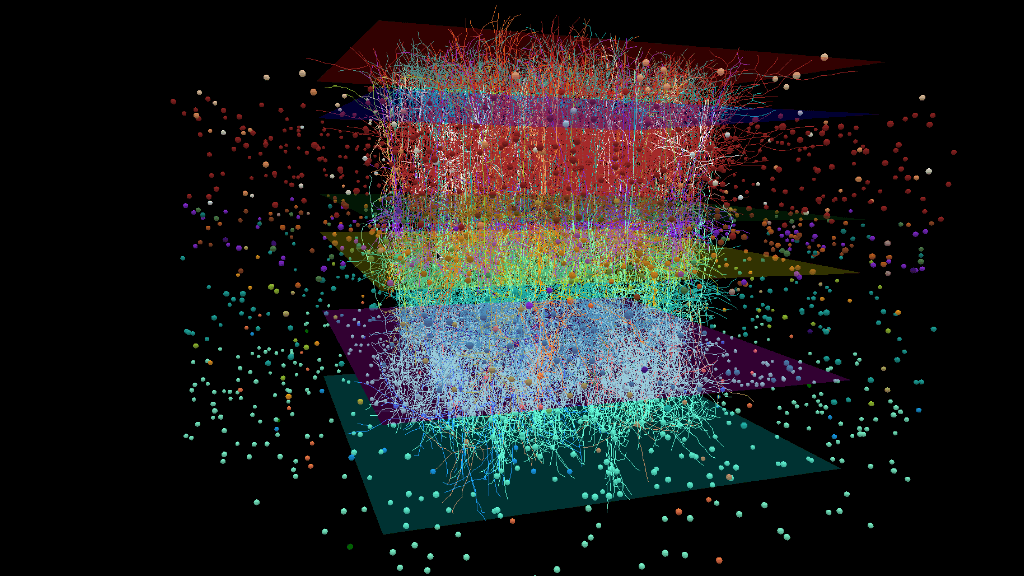

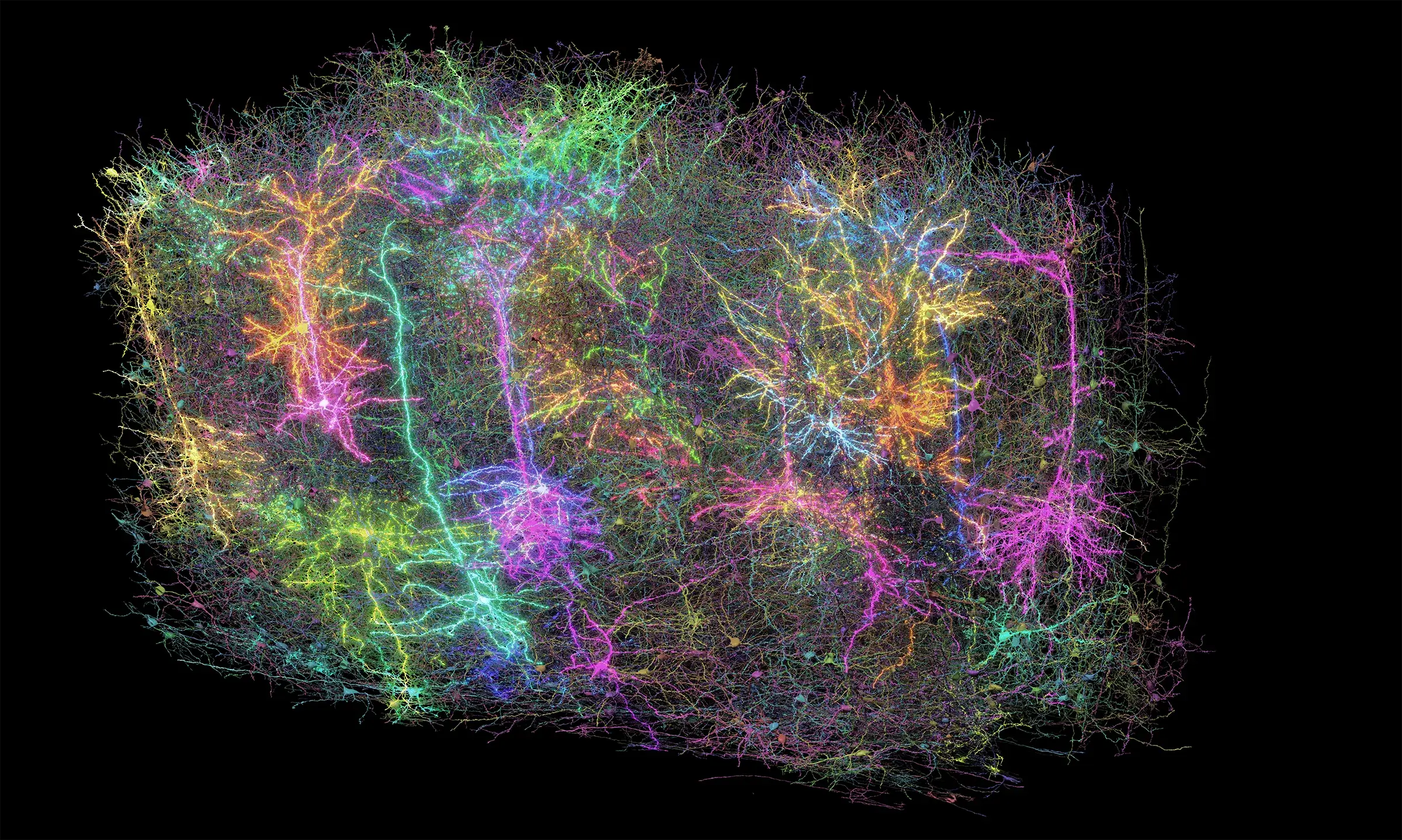

A team of computational neuroscientists at the Allen Institute has unveiled its largest virtual brain recreation to date: a computer-based simulation of the part of the mouse brain that processes what the animal sees, containing 230,000 lifelike digital neurons. The team published an article describing the new model, which is openly available for others in the community to use, in the journal Neuron earlier this month.

This virtual piece of brain aims to simulate what happens in an animal that’s engaged with its surroundings, meaning the computational neuroscientists can run “experiments” with their digital brain much as scientists do with real mice in the lab. The model was built in part using data from the Allen Brain Observatory, which captures information about neuron activity in the visual part of the mouse brain as animals see different natural images and movies, including the opening clip from the Orson Welles classic movie, “Touch of Evil.”

The simulation includes both the piece of brain itself, an area known as the primary visual cortex, and the brain pathways that feed from the eyes to that region. That means that the biologists who built it can show their model the same movies and photos that the lab mice see — including the film noir clip, translated into lines of code — and watch how the virtual brain responds. This will enable them to make predictions about what’s happening in real brains, possibly drawing connections that would be difficult or impossible to make in lab experiments.

The model’s ability to faithfully mimic the real (or cinematic) world makes the approach unique, said Anton Arkhipov, Ph.D., a computational neuroscientist at the Allen Institute for Brain Science, a division of the Allen Institute. A handful of other groups are building large-scale simulations of different parts of the mouse or rat brain, but the Allen Institute model is the only one that can watch movies or, more broadly, respond to lifelike events.

“For us it was important to look at what happens in realistic conditions, so we can compare apples to apples with the real animal,” said Arkhipov, who led the recently published study. “We think it’s a critical thing to do if you want to connect modeling to the real world.”

Lego-like connections built from data

Arkhipov and his colleagues first developed more than 100 models of individual neurons that they then stacked together, Lego-like, to build the larger mega-model. The information that fed into the single-neuron models came from the Allen Cell Types Database, which describes the attributes that make each brain cell type distinct from its neighbors.

This work expands on a smaller model the team published in late 2018, which recreated part of one layer in the layered primary visual cortex. The new model includes both more types of neurons and all the layers of the primary visual cortex. The researchers then ran virtual experiments on their creation.

“Modeling can let you understand the why behind what you observe,” said Yazan Billeh, Ph.D., a former neuroscientist at the Allen Institute and lead author on the paper describing the model. “You have the opportunity to do experiments you can’t do in real life. You can delete connections between groups of cells, or you can add new connections, or you can eliminate one type of cell and ask, what happens?”

The model spat out predictions about the strength of connections between certain neuron types and between the neurons that respond to objects moving in different directions. One of their predictions has already been independently tested, and confirmed, by scientists at University College London. That’s an essential part of the process, the Allen Institute researchers said. Computational work can narrow the field for the more expensive, more time-consuming lab experiments, but it can’t replace them.

“At the end of the day, the model is just a prediction, and it has to be verified,” Billeh said.

Open resources related to this project that others in the community can use include:

- The model of the mouse primary visual cortex, for anyone to run their own brain simulation

- An openly available pre-print of the paper describing the model

- A standard file format, SONATA, to represent large-scale neuroscience network models, developed in collaboration with the Swiss Blue Brain Project and other teams

- The Allen Cell Types Database, which includes information about mouse brain cells’ gene expression, 3D shape and electrical activity

- The Allen Brain Observatory, which captures the activity of tens of thousands of neurons in the mouse visual system.

About the Allen Institute

Allen Institute is a 501(c)(3) nonprofit medical research organization dedicated to accelerating science for a healthier world. Through large-scale, multidisciplinary research initiatives, the Institute generates foundational knowledge, data, tools, and models that are shared openly with the world to advance our understanding of life and health. Founded by Jody Allen and the late Paul G. Allen, Allen Institute is supported primarily by the Fund for Science and Technology.